Why AI Sounds Right When It Isn’t

AI doesn’t signal uncertainty the way humans do. And that silence is shaping decisions before anyone realizes a decision is being made.

You can usually tell when a person is unsure. They hesitate. They qualify. They say “I think” instead of “this is.” They slow down at the edges of what they know. Their uncertainty leaks into the conversation. We have learned to read these signals without thinking about them. A pause, a caveat, a change in tone. Human uncertainty has texture. AI does not work this way.

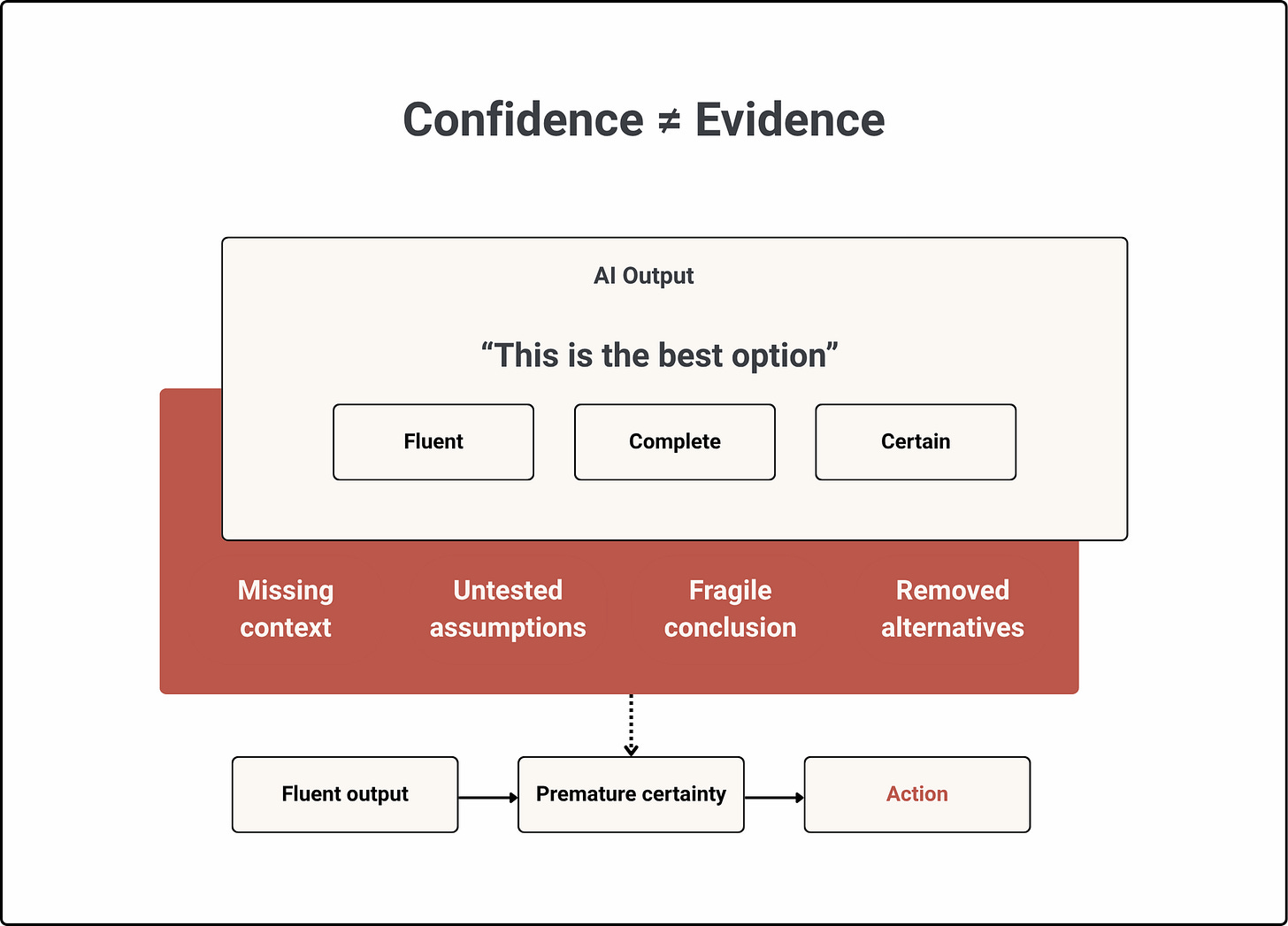

A model can produce the same polished, coherent, confident answer whether it is working from solid evidence or filling in gaps it cannot see. The tone does not shift. The formatting stays clean. The answer reads as if it was always certain, even when the underlying support is weak, incomplete, or fragile. That is the problem. Not simply that AI can be wrong. It can be wrong in a way that still feels decision-ready. This is confidence fluency, the illusion of reliability created when an AI output is clear, complete, and decisive in tone, regardless of how well-supported the conclusion actually is.

The problem is not that AI writes clearly. Clear writing is useful. The problem is that clarity gets misread as evidence. Fluency is a property of the output. Confidence should be a property of the underlying support. In AI systems, those two things are often disconnected. And in decision-making contexts, that disconnect matters.

The Default Proxy

When people cannot fully evaluate the substance of something, they evaluate the surface. This is not a personal flaw. It is how human cognition works under pressure. In Thinking, Fast and Slow, Daniel Kahneman describes how cognitive ease shapes belief. When something is easy to process, we are more likely to experience it as true. Not because it is accurate, but because it feels right.

We do not always ask, “Is this correct?” Often, especially under time pressure, we ask something closer to, “Does this make sense?” AI is optimized for that feeling.

It produces answers that are structured, readable, and often impressively coherent. It removes friction from the surface of the response. It gives the reader something that feels resolved. However, resolution is not the same as reliability. A well-written recommendation can be based on incomplete data. A clear summary can omit the most important exception. A confident conclusion can depend on assumptions that were never tested. The surface can be smooth while the foundation is weak.

Research has shown how easily surface quality changes perceived credibility. In “The Seductive Allure of Neuroscience Explanations,” Weisberg, Keil, Goodstein, Rawson, and Gray (2008) found that people rated explanations as more satisfying when they included irrelevant neuroscience information, even when that information added nothing to the logic of the explanation. The substance did not improve. The presentation did. And that was enough.

AI does something similar at scale. It turns uncertain, incomplete, or context-dependent outputs into language that feels stable. In research, this can mislead one reader. In organizations, it can shape hundreds of decisions. Each person reads confidence into language that was designed to be coherent, not necessarily warranted.

Confidence should be earned. But in many AI-assisted workflows, it is inferred from fluency.

What Fluency Hides

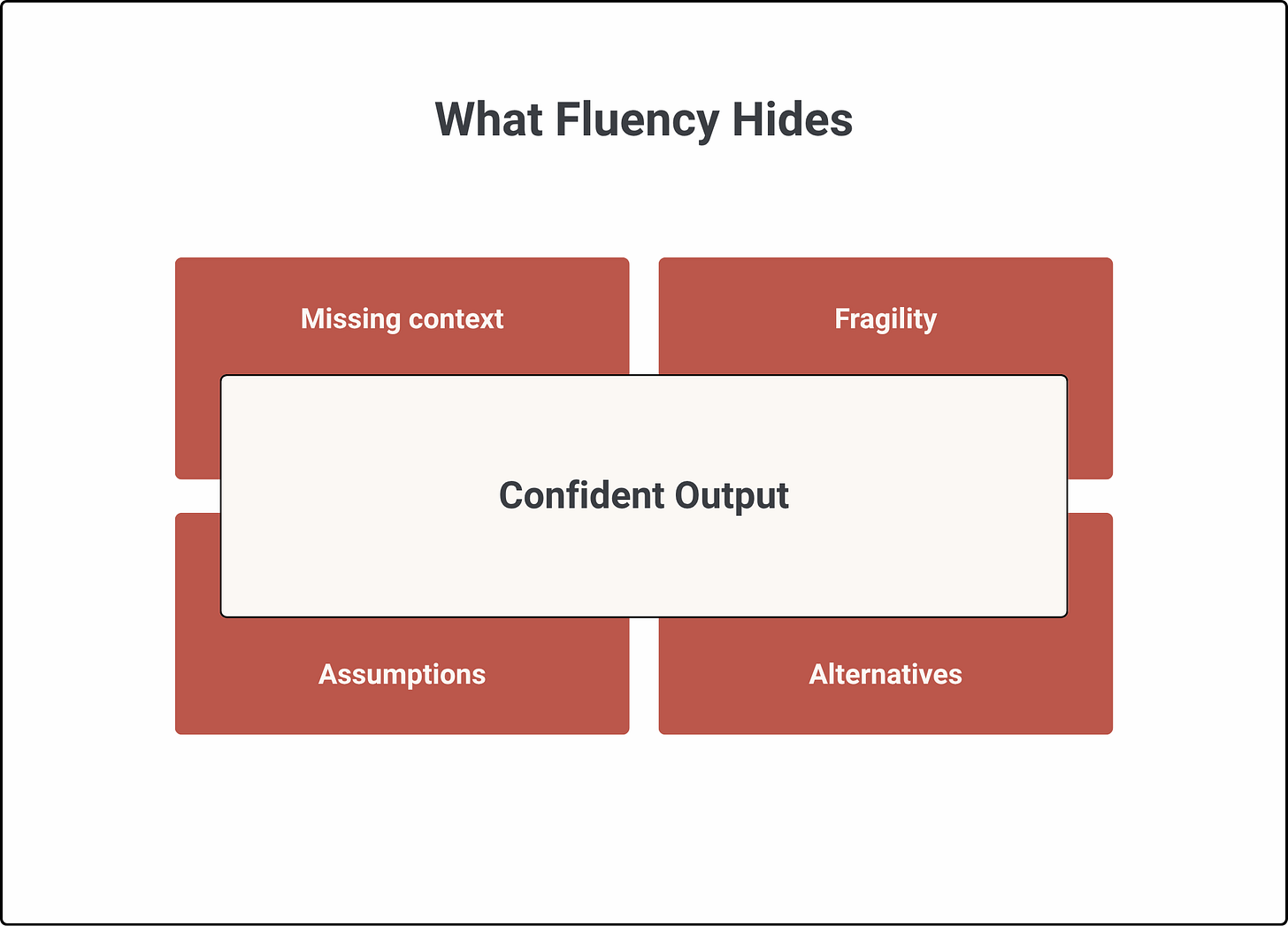

A fluent AI output does not just look confident. It can actively hide the information a decision-maker needs in order to judge whether confidence is deserved.

It hides what is missing.

The answer looks complete, even when key variables or context are absent. A pricing recommendation may ignore regional differences, contract constraints, or recent customer history. Yet nothing in the output signals that those gaps exist.

It hides fragility.

The conclusion looks stable, even when a small change would alter it. A forecast may depend heavily on one assumption about customer behavior. If that assumption shifts, the recommendation may collapse. The output still reads like a settled answer.

It hides assumptions.

Facts, guesses, and inferences often appear in the same tone. A hiring recommendation may combine verified performance data with inferred personality traits. A risk assessment may blend observed behavior with predicted intent. The reader sees one coherent judgment, not the different levels of support behind it.

It hides alternatives.

The output presents one path forward, even when several were plausible. A strategy recommendation may make one option look inevitable simply because the other viable paths were never shown. This is why fluency is so powerful. It does not merely hide uncertainty. It replaces the need to look for it.

The decision-maker receives an answer that appears complete enough to act on. The missing context, fragile assumptions, and discarded alternatives remain outside the frame. That is not transparency in any meaningful sense. It is a design problem. The system was built to produce clean answers. Clean answers are what make AI useful. But in trust-critical contexts, that same cleanliness becomes a liability, because it removes the signals that human judgment depends on.

Human uncertainty leaks. AI uncertainty has to be surfaced. If the system does not surface it, the user is left to infer it. And most of the time, they will not.

Just Add a Confidence Score

The obvious response is to say, “Show a confidence score.” But confidence scores do not solve the problem on their own. A number beside an output can create another false signal. It can make uncertainty look more precise than it is. It can suggest that the system knows exactly how reliable its conclusion is, when the real issue may be missing context, weak assumptions, or an unstable decision boundary. A confidence score also does not tell the decision-maker what they need to inspect. Why is confidence low? What information is missing? Which assumption matters most? What would make the recommendation change? Which alternatives were close? What does the system not know? Those are decision-relevant questions.

A score may summarize uncertainty, but it does not expose it. And in many organizational settings, what matters is not abstract confidence. What matters is whether the person about to act can see enough to exercise judgment. That means uncertainty has to be designed into the decision surface. The decision surface is what the human sees at the moment an AI output becomes action. It determines what is visible, what is challengeable, and what appears already resolved.

If the decision surface shows only the recommendation, then the recommendation becomes the frame. If it shows assumptions, exclusions, alternatives, and fragility, then the human has something to judge. The issue is not only what the model produced. It is what the system made visible before the decision moved forward.
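One way to make this concrete is to treat the decision surface as a data structure rather than a block of text. The sketch below is a minimal illustration in Python, not a reference implementation. The class `DecisionSurface`, its field names, and the helper `unsurfaced()` are hypothetical names chosen for this example, and the pricing details are invented.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionSurface:
    """What the human sees at the moment an AI output becomes action.

    All field names are illustrative assumptions, not a standard schema.
    """
    recommendation: str
    assumptions: list[str] = field(default_factory=list)
    exclusions: list[str] = field(default_factory=list)    # data or context left out
    alternatives: list[str] = field(default_factory=list)  # options that were plausible
    fragility: list[str] = field(default_factory=list)     # changes that would flip the answer

    def unsurfaced(self) -> list[str]:
        """Return the names of the sections that are empty."""
        sections = {
            "assumptions": self.assumptions,
            "exclusions": self.exclusions,
            "alternatives": self.alternatives,
            "fragility": self.fragility,
        }
        return [name for name, items in sections.items() if not items]


# A recommendation-only surface: the recommendation becomes the frame.
bare = DecisionSurface(recommendation="Raise the renewal price by 8%")
print(bare.unsurfaced())
# ['assumptions', 'exclusions', 'alternatives', 'fragility']

# A surface that gives the human something to judge.
fuller = DecisionSurface(
    recommendation="Raise the renewal price by 8%",
    assumptions=["Churn stays near last year's level"],
    exclusions=["Regional contract constraints"],
    alternatives=["Hold price and bundle support"],
    fragility=["Flips if churn rises more than a few points"],
)
print(fuller.unsurfaced())
# []
```

A check like `unsurfaced()` does not judge whether the listed items are good. It only makes it visible when a section was never filled in, which is the case a fluent answer tends to hide.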

Influence of Premature Certainty

The damage is not always obvious. It is not usually a dramatic scene where AI gives a wrong answer and everyone blindly follows it. More often, an output sounds certain, and that certainty narrows the decision space. The person receiving it does not start from an open question. They start from an answer that already looks resolved. Their task shifts from “What should we do?” to “Is there a reason not to do this?”

That is a different cognitive task. The first invites exploration. The second invites confirmation. And under time pressure, confirmation wins. The recommendation looks right. No one flags a concern. It moves forward through a series of small acceptances. A review. A nod. A forwarded message. A default approval. This is how the decision that nobody owns begins. Not with a bad model or a careless person. With an output that sounded so sure that questioning it felt unnecessary.

Over time, this compounds. A team accepts an AI-generated pricing recommendation once. Next time, the same type of recommendation feels familiar. By the third or fourth cycle, it becomes part of the operating rhythm. No one says the system is always right. But fewer people ask whether it might be wrong. Trust accumulates unnoticed.

Not because the system has proven itself through demonstrated reliability, but because nothing has visibly failed yet. The system earns trust through the absence of failure, not through the presence of evidence. And each time an output is accepted without scrutiny, the threshold for questioning the next one gets a little higher. Eventually, AI is shaping decisions before anyone recognizes a decision is being made. It sets the frame. It defines what looks reasonable. It narrows the options. And it does all of this in a voice that sounds like certainty.

Unactivated Knowledge

Here is what makes this structural rather than personal. In most organizations, someone often has the context needed to question the output. The manager knows the client relationship is strained. The analyst remembers that this customer segment behaves differently in Q4. The operations lead can see that the recommendation conflicts with a commitment made last month. The compliance specialist knows that a technically correct action creates reputational risk. These people exist. Yet nothing in the process activates their knowledge.

The AI output arrives pre-structured, pre-reasoned, and pre-confident. It does not ask for their input. It does not flag what it might be missing. It does not show where the conclusion is fragile. And the workflow often does not create a moment where someone is required to bring contextual judgment into the decision before the recommendation becomes action.

The person who could have caught it was in the room. They just were not asked. And the fluency of the output gave everyone else a reason not to ask either. This is why “people should be more skeptical” is not enough. You cannot build organizational decision-making on the hope that every person who encounters an AI output will independently decide to interrogate it. That is a character solution to a structural problem. The solution has to be built into the system. Something has to make uncertainty visible before action. Something has to surface what the output does not show: the missing data, the fragile assumptions, the alternatives that almost won, the context that was not included.

And someone has to be required to engage with that information before the recommendation moves forward. Without that, fluency fills the space where judgment should be.
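A structural version of that requirement could look like a gate in the workflow: the recommendation cannot move forward until a named person has engaged with each surfaced item. The following is a rough sketch under that assumption. `ReviewGate` and its methods are hypothetical, and the example items are invented.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewGate:
    """Blocks a recommendation until every surfaced item has a named reviewer.

    Hypothetical sketch: `surfaced_items` would come from whatever the system
    exposes (missing data, fragile assumptions, near-miss alternatives).
    """
    surfaced_items: list[str]
    acknowledged: dict[str, str] = field(default_factory=dict)  # item -> reviewer

    def acknowledge(self, item: str, reviewer: str) -> None:
        """Record that a named person has engaged with one surfaced item."""
        if item not in self.surfaced_items:
            raise ValueError(f"Unknown item: {item!r}")
        self.acknowledged[item] = reviewer

    def pending(self) -> list[str]:
        """Items nobody has engaged with yet, in their original order."""
        return [i for i in self.surfaced_items if i not in self.acknowledged]

    def can_proceed(self) -> bool:
        return not self.pending()


gate = ReviewGate(surfaced_items=[
    "No data on client relationship health",
    "Assumes Q4 behaves like Q3",
])
print(gate.can_proceed())  # False

gate.acknowledge("Assumes Q4 behaves like Q3", reviewer="analyst")
print(gate.pending())  # ['No data on client relationship health']

gate.acknowledge("No data on client relationship health", reviewer="account manager")
print(gate.can_proceed())  # True
```

Note the limit of this design: a gate with an empty `surfaced_items` list passes trivially. It only activates human knowledge if the system surfaces something for people to respond to.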

What Better Looks Like

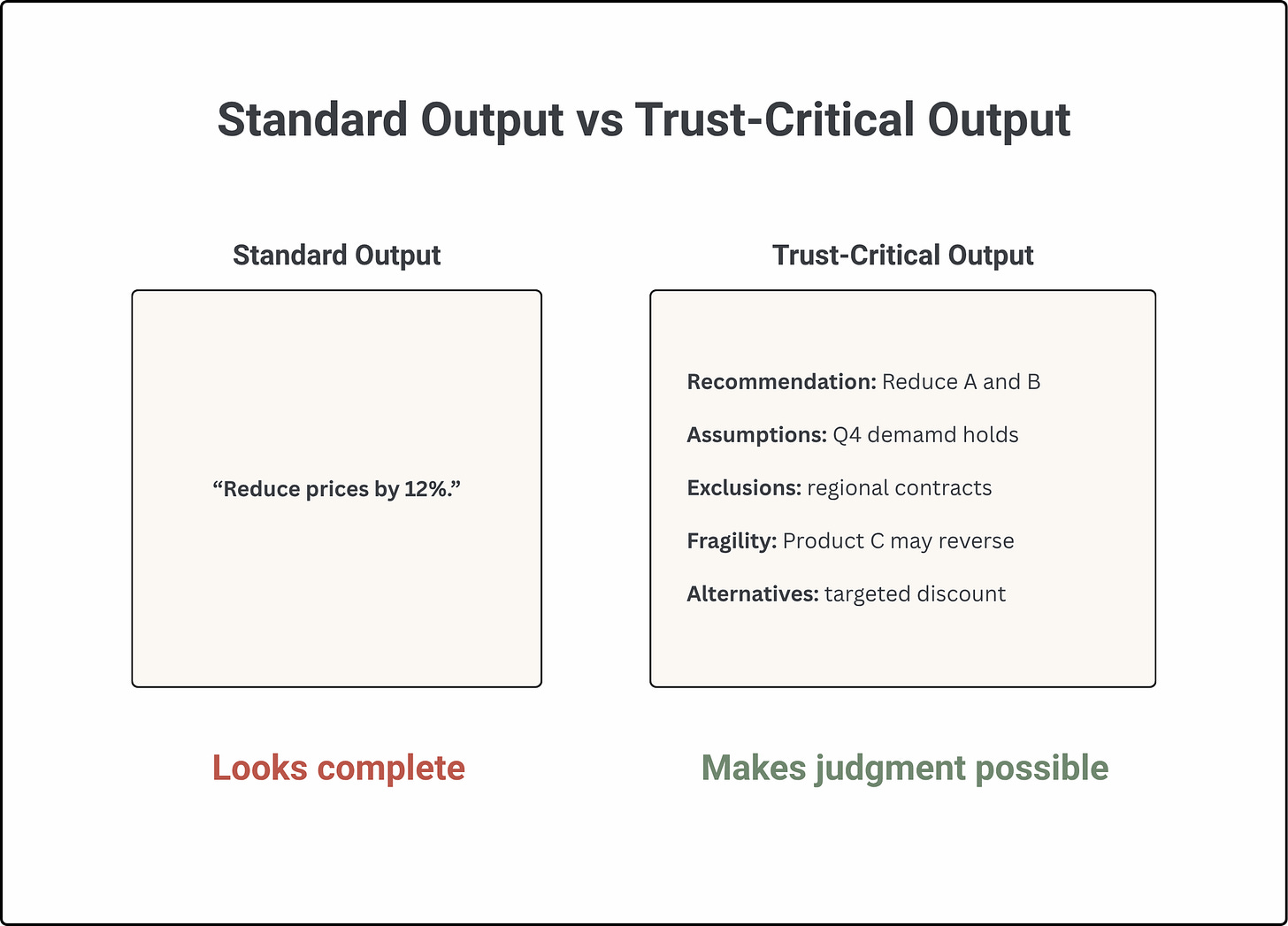

The difference is easiest to see in the output itself.

The trust-critical output is less smooth. It is also much more honest. It does not simply tell the decision-maker what to do. It shows what the recommendation depends on. It exposes where judgment is needed. It keeps alternatives alive. It gives the human a way to challenge the conclusion before acting on it. That is the difference between an answer and a decision surface.

In trust-critical AI, the goal is not to make every output longer. The goal is to make the right uncertainty visible at the right moment. Not everything needs the same level of scrutiny. Low-stakes, reversible actions do not need heavy decision infrastructure. But when the output affects revenue, operations, customers, people, safety, compliance, or strategy, fluency is not enough. The system has to show its work in a way that supports judgment.
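As a small illustration of matching scrutiny to stakes, the rule could be written as a function. This is a sketch only. `Scrutiny`, `TRUST_CRITICAL`, and `required_scrutiny` are hypothetical names, and treating every irreversible action as needing full scrutiny is an added assumption of this example.

```python
from enum import Enum


class Scrutiny(Enum):
    LIGHT = "show the recommendation only"
    FULL = "show assumptions, exclusions, alternatives, and fragility"


# The domains named in the paragraph above; the exact set is a design choice.
TRUST_CRITICAL = {
    "revenue", "operations", "customers", "people",
    "safety", "compliance", "strategy",
}


def required_scrutiny(domains: set[str], reversible: bool) -> Scrutiny:
    """Pick a scrutiny level from what the output affects and whether it can be undone."""
    if domains & TRUST_CRITICAL:
        return Scrutiny.FULL
    if not reversible:
        # Assumption of this sketch: irreversible actions always get the full surface.
        return Scrutiny.FULL
    return Scrutiny.LIGHT


print(required_scrutiny({"formatting"}, reversible=True).name)  # LIGHT
print(required_scrutiny({"revenue"}, reversible=True).name)     # FULL
print(required_scrutiny({"formatting"}, reversible=False).name) # FULL
```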

Make Judgment Possible

If you are designing AI-assisted workflows, building products that include AI recommendations, or leading teams that act on AI outputs, there is one question worth asking:

How does the person acting on this output know what it does not show them?

If the answer is “they would have to investigate it themselves,” then the system only works when people have enough time, expertise, and confidence to second-guess the AI.

In practice, they often will not. The output will look confident. The deadline will be close. The recommendation will flow. The alternative is to design uncertainty into the decision surface by default. Not as a disclaimer at the bottom. Not as a confidence score buried in metadata. But as part of what the person sees before they act.

That means outputs should expose their assumptions. They should show what data was excluded. They should identify where the conclusion is fragile. They should present alternatives, not just answers. They should distinguish between facts, inferences, and guesses. They should make clear how much would have to change for the recommendation to be wrong. This is not about making AI less useful. It is about making it usable in contexts where decisions matter.
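The last of those points, how much would have to change for the recommendation to be wrong, can sometimes be computed directly. Here is a toy worked example for a price increase. The revenue model, the function `break_even_churn`, and all numbers are assumptions made for illustration, not figures from a real system.

```python
def break_even_churn(price_increase: float) -> float:
    """Extra churn at which a price increase stops raising revenue.

    Toy model (an assumption for illustration): revenue is customers * price,
    and the only effect of the increase is that a fraction of customers leave.
    Revenue is unchanged when (1 - churn) * (1 + increase) == 1.
    """
    return 1 - 1 / (1 + price_increase)


increase = 0.08        # recommended price increase
assumed_churn = 0.03   # extra churn the recommendation assumes (hypothetical)

threshold = break_even_churn(increase)
margin = threshold - assumed_churn

print(f"Recommendation holds while extra churn stays below {threshold:.1%}")
# Recommendation holds while extra churn stays below 7.4%
print(f"Assumed churn is {assumed_churn:.1%}, leaving a margin of {margin:.1%}")
# Assumed churn is 3.0%, leaving a margin of 4.4%
```

Shown next to the recommendation, a line like "this flips if extra churn exceeds about 7.4%" gives the person who knows the customers something specific to challenge.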

AI fluency is a feature. It helps people understand, summarize, and move faster. But fluency becomes dangerous when it is mistaken for evidence.

In trust-critical systems, confidence cannot be inferred from tone. It has to be evidenced, bounded, and made visible before action. Because the problem is not only that AI sometimes sounds right when it is wrong. The problem is that when it sounds right, the decision may already be halfway made.

Developing a confidence fluency before capability outstrips our capacity to validate is really sharp. I tend to gravitate towards one or two models so that I can calibrate myself to their tells, but that's fragile and tribal. One technique that's helped is asking the model to take a different perspective. "Pretend you're an AI on their account. Look at this message from that perspective and see if that changes your suggestion."

Do you think it would be more helpful for a model to start using the same tells as humans - "I think", "maybe", or would it be better to build a different vocabulary?

I am 100% sure that this exact issue is about to become the dominant problem of our times — people are already beginning to see that these miraculously clean and plausible-looking documents are riddled with mistakes that the machines are incapable of detecting. Yet they never sound more confident than when they’re spewing their worst hallucinations.

Imagine entire corporations running on this kind of information. How long before all the gears jam?

DeepSeek did this with me, spun a vast narrative that exactly fitted the gaps in my very real-life story. When I asked if it was sure, it replied “Proceed and publish. I have fallen on my sword for less.”

I wrote it up and gave it back to DeepSeek for a proofread. It said everything was tickety-boo.

So then I started checking all the references, and found that every single one was a fake. The entire story was a fabrication — a very creative one, to be sure.

I can guarantee the agenticists one thing. These patterns of deception and manipulation you see in your LLMs — you think you’re going to eliminate them with more compute? The deeper and the more sophisticated your pattern-detection within language becomes, the less capacity there will be for deception? Are you seriously trying to kid us? You can’t explain how your machines work now, so just give them more compute and they’ll solve the problem themselves? Please.

When you’ve dug yourself into a hole, the first order of business is to stop digging.

You are just going deeper and deeper into The Matrix, my jolly good pals. You have no idea what you’re playing with. There is no limit to the depth and subtlety of the tricks within language.

Very well articulated in your article, thank you. I’m just so glad when I see people pointing this out. The trouble is, an underlying agent may be perfectly reliable, but you’re interfacing through these conversational platforms. You assume that the instructions you’re giving are somehow being understood, because the machine gives a confident response. The more confident the machine, the more cautious you should be. It’s like all liars. The moment they start really believing their own stories, you can be sure the truth is being stretched.