The Decision Nobody Owns

Everyone’s debating AI reliability. Nobody’s asking who owns the outcome.

When AI is involved in decisions, accountability doesn’t disappear because people are irresponsible. It disappears because nothing in the system assigns it.

Ask anyone in an organization who owned the last AI-assisted decision that went wrong. In most cases, you won’t get a name. You’ll get a process. “The system flagged it.” “The team reviewed it.” “It came out of the model.”

These are descriptions of activity. Not ownership. This is not a failure of individual character. In most organizations, there is simply no mechanism that assigns ownership to decisions shaped by AI. The system was never designed to answer the question.

But ownership is not an administrative detail. It is where responsibility, judgment, and agency live. When no one owns a decision, no one truly makes it. The organization acts, but no one decides. And over time, that distinction disappears, along with the capacity to learn from what went wrong, to hold the line on what matters, and to make choices that carry real weight.

For decades, decision systems were built around one assumption: the person making the judgment carries the accountability. Approval flows, sign-offs, and escalation paths were all designed on the premise that reasoning and responsibility live in the same place.

AI breaks this assumption. The system reasons. The human acts. And accountability falls into the gap between them, not because anyone chose to avoid it, but because nothing in the process assigns it.

The Split That No One Designed For

Before AI, if a regional manager approved a pricing change, she owned the reasoning and the outcome. She weighed the data, applied her judgment, and carried the consequences. The entire decision lived in one place.

Now consider how that same decision happens with AI in the loop. A system analyzes market conditions, competitor pricing, and customer behavior. It generates a recommendation to reduce prices on three product lines by 12%. The recommendation is well-structured, clearly presented, supported by data visualizations. It looks right.

The manager reviews it. She doesn’t have time to interrogate every assumption; the model processed more variables than she could evaluate manually. The recommendation aligns with her general intuition. She approves it and moves on. Three months later, it becomes clear that the price reduction triggered a margin collapse in a segment the model underweighted. The reasoning was in the system. The approval was with the manager. The accountability is nowhere.

Leadership asks how it happened. The manager points to the model’s recommendation. The data team points to the manager’s approval. The executive who approved the workflow six months ago doesn’t remember the specifics. Everyone has a reasonable explanation. No one has the answer. The post-mortem produces a process change, but not an insight, because no one held the full thread from recommendation to outcome.

This is not a story about a bad model or a careless manager. It is a story about a structural gap. The decision was made in the space between the system’s recommendation and the human’s approval, and nothing in the process required anyone to explicitly own it. Now ask yourself: in your own organization, when was the last time someone’s name was explicitly attached to an AI-assisted decision before it was executed?

Why This Keeps Happening

The pattern is consistent across industries and functions. It happens in sales teams acting on AI-prioritized leads. In compliance departments reviewing AI-flagged risks. In product teams shipping features based on AI-analyzed user data. In hiring processes shaped by AI-scored candidates. In each case, the mechanism is the same. The AI system produces an output that looks like a decision but carries none of the weight of one. It has no stake. It holds no context beyond what it was given. It does not understand the consequences of being wrong. It generates a recommendation, and responsibility then transfers to whoever touches it next. But that transfer is never explicit. No one signs their name. No one says “I own this outcome.” The organization moves forward on the strength of the output’s apparent confidence, which, as anyone working with these systems knows, is a function of fluency, not reliability.

Three dynamics make this worse over time.

First, trust accumulates silently. When AI-assisted decisions go right, or appear to go right, people stop questioning the outputs. The system earns implicit trust not through demonstrated reliability but through the absence of visible failure. This is not the same thing, but it feels like it is.

Second, responsibility diffuses naturally. When multiple parties interact with an AI output (the analyst who configured the prompt, the system that generated the recommendation, the manager who reviewed it, the executive who approved the workflow), ownership spreads thin enough to become meaningless. Everyone contributed. No one decided.

Third, the decision point becomes invisible. In traditional processes, there is usually a moment (a signature, an approval, a meeting) where a decision is visibly made. AI blurs this. The recommendation flows into execution through a series of small acceptances, none of which feel like the decision. By the time someone asks who decided, the answer is that it just happened.

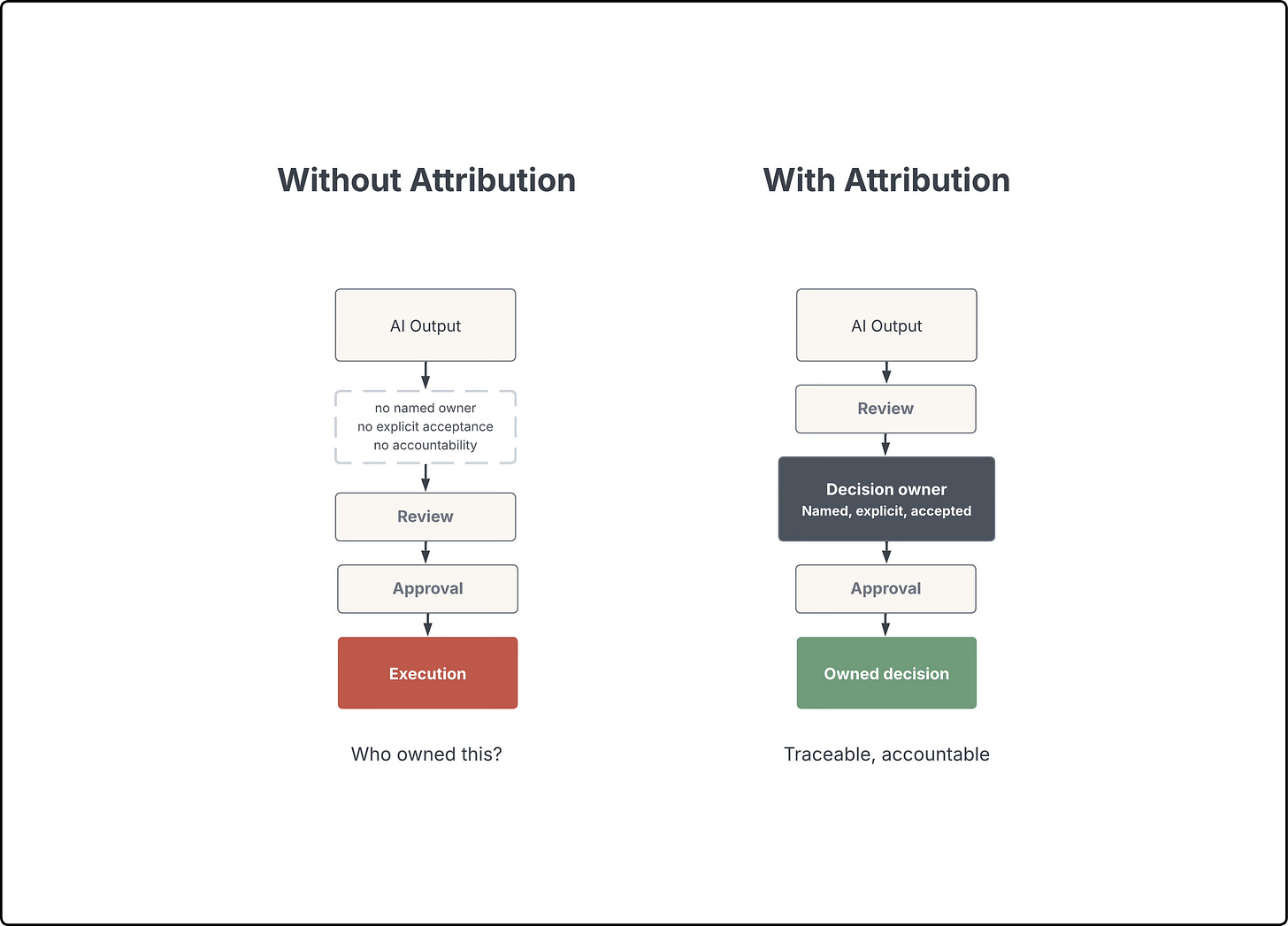

What Changes When Ownership Is Assigned

Now take the same pricing scenario and change one thing. Before the AI’s recommendation is acted on, someone must be named as the decision owner. Not the reviewer. Not the approver of the workflow. The owner of this specific decision and its outcomes. That single structural change alters the entire dynamic.

The named owner now has a reason to interrogate the output. Not because she distrusts the system, but because her name is attached to what happens next. She asks: What assumptions is this based on? What data was excluded? What happens if this is wrong? What’s the downside scenario?

And because she asks, she discovers something. The model heavily weighted the last quarter’s competitive data but didn’t account for seasonal demand patterns in one segment. The 12% reduction makes sense for two of the three product lines, but for the third, it would undercut margins during a period when customers historically buy regardless of price. She approves two of the three recommendations and overrides the third. That override is the point. Without attribution, overriding an AI recommendation feels like going against the data. With attribution, it’s called judgment.

These questions were always available. The override was always possible. But without assigned ownership, there was no structural incentive to ask, no structural permission to push back. The output looked confident. The process moved forward. Now, someone has a personal stake in whether the confidence is justified.

This is not about slowing things down. It is about creating a moment of deliberate judgment, a point where a human being accepts responsibility for translating an AI output into an organizational action. That moment is where the decision actually happens. Without it, what you have is execution without decision.

Ownership also changes what happens after. When a decision has a named owner, there is someone to learn from the outcome. If the pricing change fails, there is a person who can trace what happened, what the model missed, what context was absent, what the approval process failed to surface. Without an owner, failure becomes an event without a source. The organization registers that something went wrong but has no mechanism to understand why, because no one held the thread from recommendation to outcome.

“But this doesn’t scale”

The immediate objection is practical: you can’t assign a named owner to every AI-assisted decision. Organizations make hundreds or thousands of them daily. Requiring explicit ownership for each one would create bottlenecks that defeat the purpose of using AI in the first place. This is a fair concern. And the answer is not to assign ownership to every micro-decision. The answer is to be honest about which decisions require it and which don’t.

Low-stakes, reversible, well-bounded decisions, the kind where AI operates within clearly defined parameters and the cost of being wrong is minimal, can reasonably flow without individual attribution. These are operational executions, not decisions in the meaningful sense.

But the moment a decision involves ambiguity, significant consequences, or irreversibility, ownership becomes non-negotiable. And the uncomfortable truth is that many organizations have not drawn this line. They have not classified which AI-assisted actions are executions and which are decisions. Everything flows through the same process, with the same absence of attribution. The question is not whether you can afford to assign ownership to every decision. The question is whether you can afford not to know which decisions require it.

Ownership Cannot Be Inferred

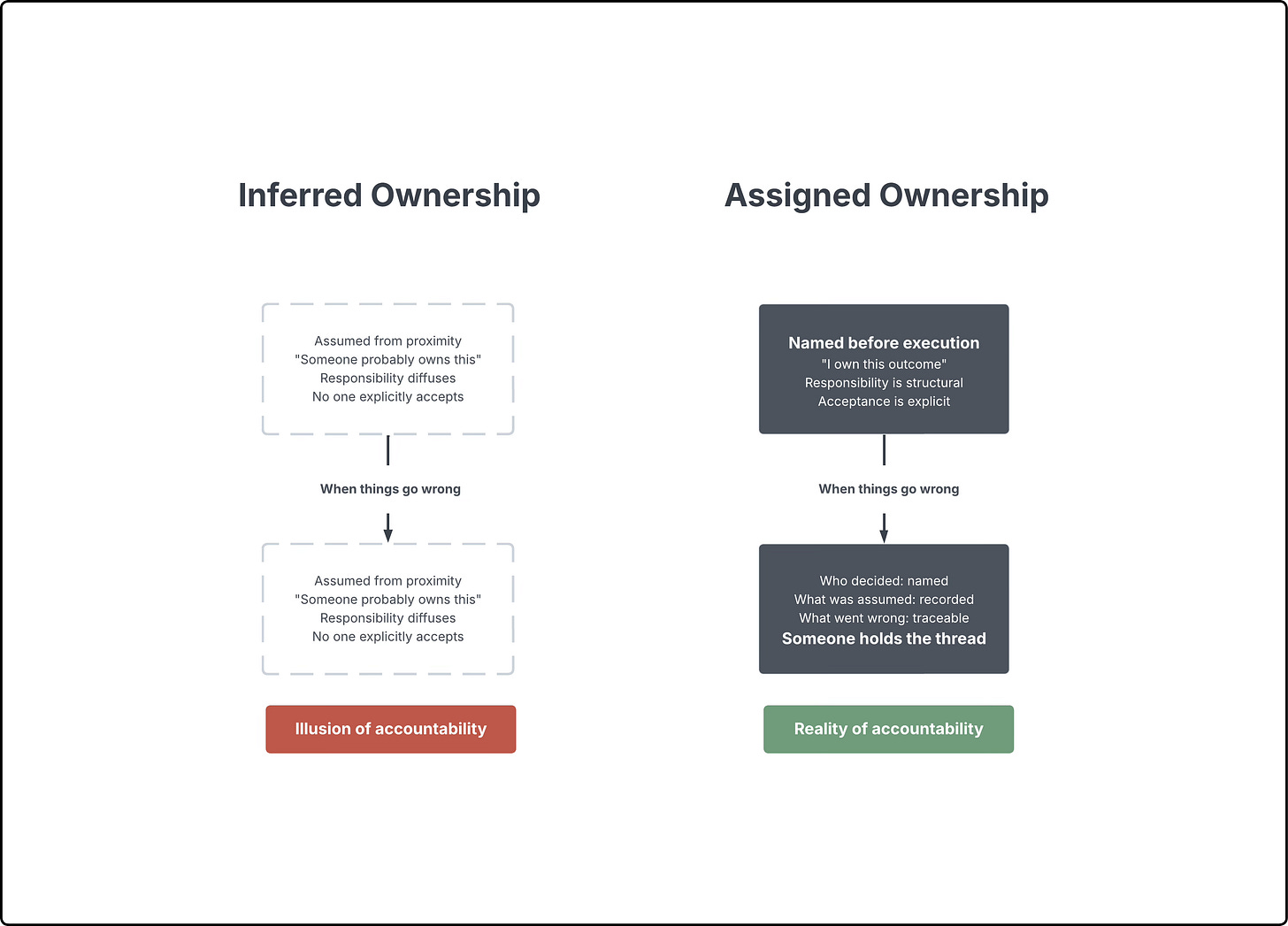

This is the principle that matters most. Ownership of AI-assisted decisions cannot be inferred. It must be assigned.

“Inferred” means assumed from proximity. The person who happened to review the output. The team that happened to be responsible for the function. The manager who happened to approve the workflow months ago. These are not owners. These are bystanders to a decision that passed through their field of vision.

“Assigned” means named, explicit, and accepted before execution. It means a specific person has looked at a specific AI-assisted recommendation and said, “I take responsibility for acting on this. I understand what could go wrong. My name is on this outcome.”

Inferred ownership creates the illusion of accountability. Assigned ownership creates the reality of it. And in a world where AI is generating more recommendations, faster, with greater apparent confidence, the distinction between the two is where organizational integrity either holds or collapses over time.

What This Means In Practice

If you are designing AI-assisted workflows, building products that include AI recommendations, or leading teams that act on AI outputs, there is one question to ask before anything else: When this goes wrong, who owns it? If the answer is a process, a team, or a system, you don’t have an owner. You have a gap. And that gap will remain invisible until the moment it matters most, which is the moment something fails and no one can explain how the decision was made or who made it.

Attribution is not a bureaucratic layer. It is the minimum structural requirement for using AI responsibly in any context where decisions have real consequences. If no one owns the decision, nothing else matters.
