AI Is Not Designed for Decision-Making

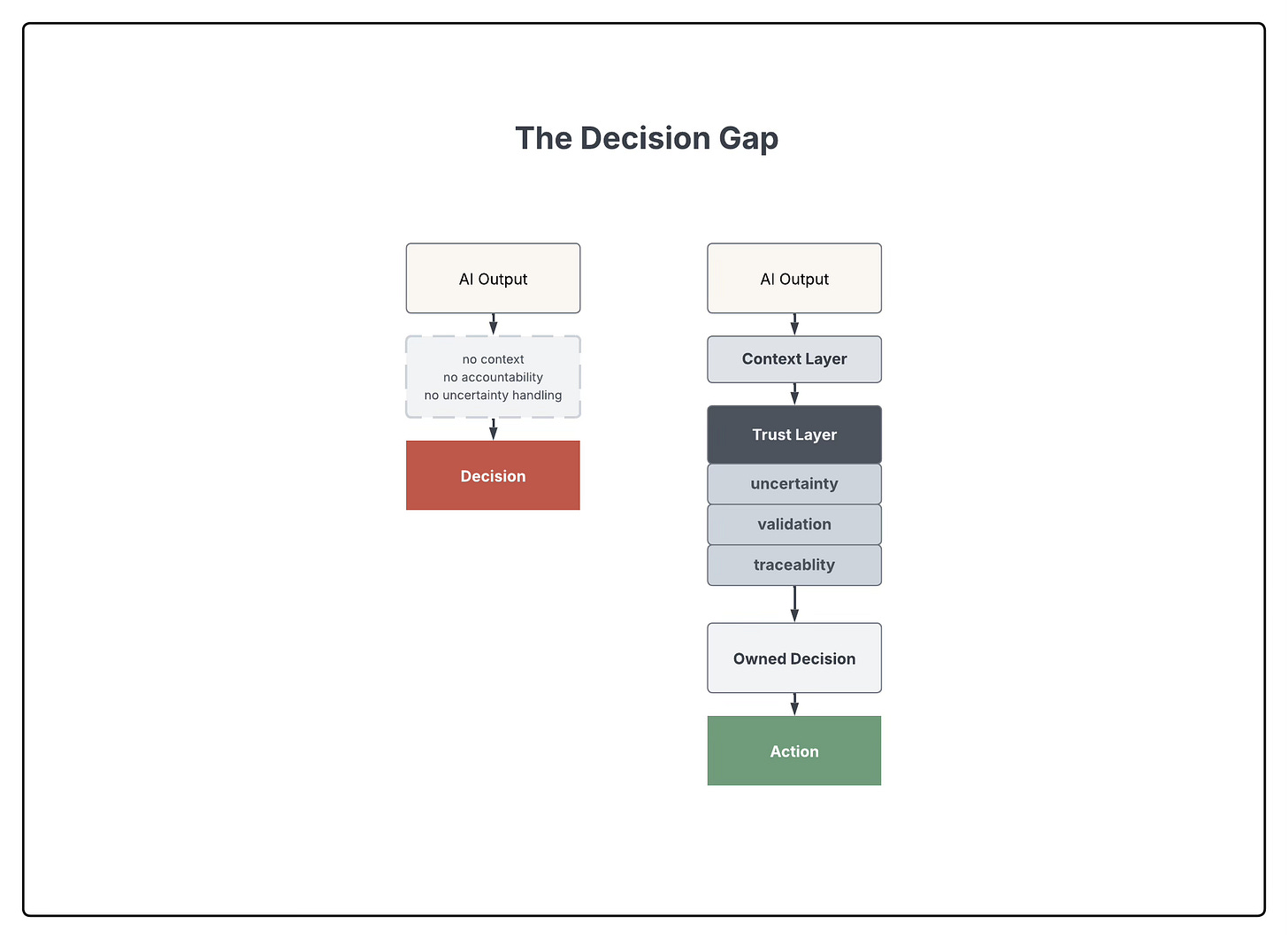

A growing gap between what AI produces and what decisions require.

AI systems are increasingly used to support decisions. They generate answers quickly, often confidently, across a wide range of contexts. This makes them useful. It also makes them easy to over-rely on. But AI systems are not designed to make decisions. And this mismatch is starting to matter.

In most organizations, decisions are contextual, ambiguous, and tied to real consequences. They require judgment, trade-offs, and ownership. They often involve incomplete information, competing priorities, and uncertainty that cannot be resolved upfront.

AI systems, by contrast, produce outputs. They do not hold context beyond what is provided. They do not understand consequences. And they do not take responsibility. They generate responses.

This creates a gap between what AI produces and what decisions actually require.

Built for Answers, Not Decisions

AI systems are optimized for generating responses. They are trained to produce plausible, coherent outputs based on patterns in data. This is a powerful capability. But decisions require more than responses. They require context, accountability, traceability, and awareness of consequences. AI does not provide these by default.

In practice, this means that an AI system can produce a well-formed answer that appears correct, while lacking the information and grounding needed for a real decision. The output can be useful. But it is incomplete.

How Decisions Start to Drift

When AI is used in decision contexts, the failure modes are predictable:

Outputs are treated as recommendations without sufficient validation

Confidence is inferred from fluency, not from reliability

The reasoning behind outputs is often unclear or inaccessible

Responsibility becomes diffused between system and user

These are not edge cases. They are structural properties of how current AI systems are used.

The result is not necessarily immediate failure. More often, it is subtle degradation: slightly worse decisions, increased risk, and reduced clarity around ownership. Over time, these accumulate.

The Decision No One Really Made

A team uses AI to summarize customer conversations and suggest next steps for a sales opportunity. The system produces a clear recommendation: follow up with a specific offer, framed as high priority. The output is well-written, confident, and plausible. It fits the situation at a glance.

But it is based on incomplete context. It is missing signals from earlier interactions, and it has no awareness of internal constraints or broader strategy. The system does not know that the client had already disengaged in a previous exchange. It does not know that similar offers have recently failed. And it does not know that the account is no longer a priority.

No one questions the output. It looks correct. And so it is executed. Later, it becomes clear that the recommendation accelerated the loss of the deal. Nothing in the system indicated uncertainty. And in the process, no one explicitly owned the decision. The output was correct in form. But wrong in context.

AI Fails Without Structure

The issue is not that AI systems are fundamentally flawed. The issue is that they are being used in systems they were not designed for. We are placing output-generating systems into decision-making environments. We are treating generated responses as inputs into decisions, without designing the structure around them.

What’s missing is not a better model. It is the system in which the model operates.

In decision-making contexts, AI needs structure. Not just access. Not just speed. Structure.

This includes:

clear points of human control

explicit handling of uncertainty

defined ownership of decisions

visibility into how outputs are formed

Without this, AI introduces hidden risk. Not because it is inaccurate. But because it is easy to trust in situations where trust should be conditional.
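To make the four elements above concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen for illustration: the names `Recommendation`, `Decision`, `propose`, and `sign_off`, the `min_confidence` threshold, and above all the assumption that the system reports a usable confidence value at all. It is one possible shape for such a structure, not a reference implementation.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Recommendation:
    """An AI output plus the metadata a decision needs (all fields are hypothetical)."""
    text: str
    confidence: Optional[float]  # None means the system reported no uncertainty estimate
    context_used: List[str] = field(default_factory=list)     # visibility into how the output was formed
    context_missing: List[str] = field(default_factory=list)  # known gaps in the input


@dataclass
class Decision:
    recommendation: Recommendation
    owner: str    # defined ownership: a named person, never the system
    status: str   # "needs_review", "approved", or "rejected"
    reasons: List[str] = field(default_factory=list)


def propose(rec: Recommendation, owner: str, min_confidence: float = 0.8) -> Decision:
    """Wrap an output as a pending decision and make its uncertainty explicit."""
    if not owner:
        raise ValueError("every decision needs a named owner")
    reasons = []
    if rec.confidence is None:
        reasons.append("no uncertainty estimate provided")
    elif rec.confidence < min_confidence:
        reasons.append(f"confidence {rec.confidence:.2f} below {min_confidence:.2f}")
    for gap in rec.context_missing:
        reasons.append(f"missing context: {gap}")
    # Point of human control: nothing leaves this function already approved.
    return Decision(rec, owner, "needs_review", reasons)


def sign_off(decision: Decision, approver: str, approve: bool) -> Decision:
    """Only the named owner can approve or reject the decision."""
    if approver != decision.owner:
        raise PermissionError("only the owner can sign off")
    decision.status = "approved" if approve else "rejected"
    return decision


# Usage: an output that looks fine but arrives with known gaps.
rec = Recommendation(
    text="Follow up with a specific offer (high priority)",
    confidence=None,
    context_used=["latest call summary"],
    context_missing=["earlier interactions", "account priority"],
)
decision = propose(rec, owner="account_lead")
print(decision.status, decision.reasons)
```

The design choice worth noting is that `propose` never returns an approved decision. The output stays a proposal, with its gaps listed, until the named owner signs off.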

Trust Must Be Designed

Some uses of AI are low-stakes. Others are not. In many organizational settings, decisions affect revenue, operations, and people. In these contexts, errors are not just inaccuracies. They have consequences. These are trust-critical environments. In such environments, trust cannot be assumed. It must be designed.

I work on internal AI systems used in decision-making contexts. This has made one thing clear. The challenge is not generating better answers. It is designing systems in which those answers can be used responsibly. The difference is not in the model. It is in how the system structures decisions, distributes responsibility, and makes uncertainty visible. This work focuses on how AI operates inside real decision systems.

Specifically:

how decisions are structured around AI

how control and responsibility are defined

where human judgment is required

where systems break down in practice

The goal is not to optimize outputs. It is to design systems that can be trusted when decisions matter. AI is not just a tool. It is becoming part of how decisions are made. That means the problem is no longer only technical. It is systemic. And systems need to be designed for control, accountability, and trust. Not just output.

The smart data layer and current context issues cause a fair few problems with agents at the moment. If these are resolved, I can see agents making decisions that far outperform humans in certain areas.

Those trust and uncertainty layers are doing more work than most people will appreciate right now. And the problem compounds when you factor in how volatile everything else is simultaneously: trade, institutions, what 'reliable information' even means. The gap isn't just a design problem. The ground those layers are supposed to anchor to is shifting too.